The Overlay/Underlay on Container Networking

Lee Calcote | Tony Burke

December 7th, 2016

Show of Hands

Container Networking

...it's complicated.

Preset Expectations

Experience & Management

- developer-friendly and application-driven
- simple to use and deploy for developers and operators

Reliability & Performance

- same demands and measurements
- better than, or at least on par with, their existing virtualized data center networking

Container Networking Specifications

Very interesting, but no need to actually know these

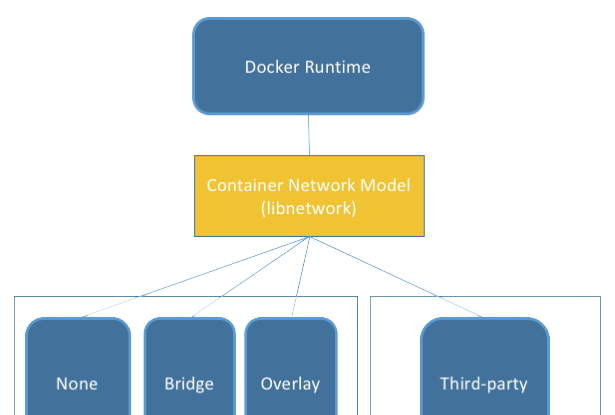

Container Network Model (CNM)

...is a specification proposed by Docker, adopted by projects such as libnetwork.

Plugins built by projects such as Weave, Project Calico, Plumgrid and Kuryr.

Container Network Interface (CNI)

...is a specification proposed by CoreOS and adopted by projects such as rkt, Kurma, Kubernetes, Cloud Foundry, and Apache Mesos.

Plugins created by projects such as Weave, Project Calico, Plumgrid, Midokura and Contiv Networking.

Container Networking Specifications


Container Network Model

(Diagram: the CNM Specification, with Local Drivers and Remote Drivers.)

Container Network Model Topology

(Diagram: Docker containers, each with a Network Sandbox holding one or more Endpoints; Endpoints attach to a Backend Network or a Frontend Network, and one container joins both.)

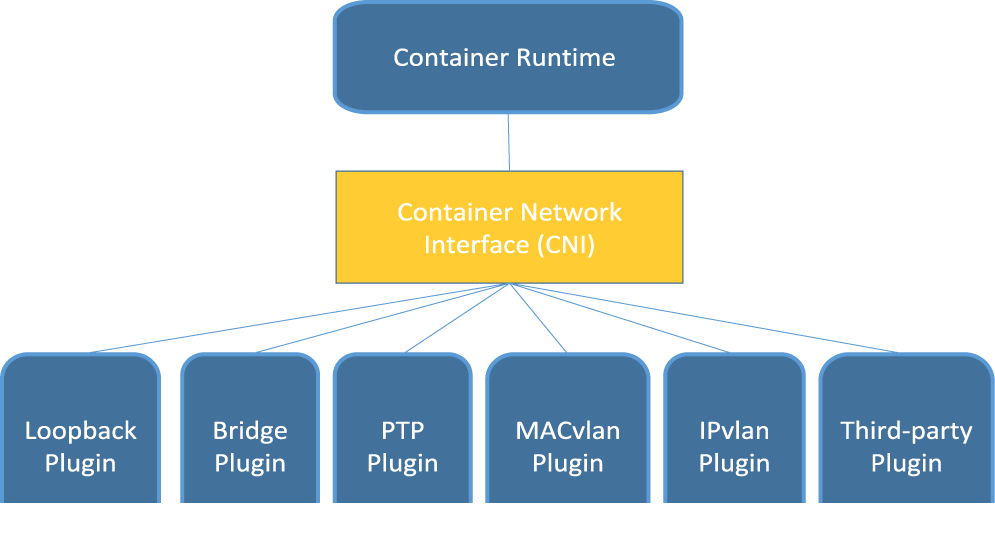
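To make the topology concrete, here is a minimal sketch of the three CNM constructs as plain Python objects. The class and method names (`Network`, `Endpoint`, `Sandbox`, `join`) are hypothetical illustrations, not libnetwork's API: a sandbox stands in for a container's network namespace, and each endpoint connects that sandbox to exactly one network.

```python
from dataclasses import dataclass, field


# Hypothetical, simplified model of the CNM constructs; not libnetwork's real API.
@dataclass
class Network:
    name: str
    driver: str                                   # each network is backed by one driver
    endpoints: list = field(default_factory=list)  # names of attached endpoints


@dataclass
class Endpoint:
    name: str
    network: Network                              # an endpoint belongs to exactly one network


@dataclass
class Sandbox:
    container_id: str                             # one sandbox (network namespace) per container
    endpoints: list = field(default_factory=list)

    def join(self, network: Network) -> Endpoint:
        """Create an endpoint in this sandbox that attaches it to `network`."""
        ep = Endpoint(name=f"{self.container_id}-{network.name}", network=network)
        self.endpoints.append(ep)
        network.endpoints.append(ep.name)
        return ep

    def networks(self):
        """Names of every network this container has joined, in join order."""
        return [ep.network.name for ep in self.endpoints]
```

A container that joins both networks, like the right-hand container in the diagram, simply calls `join` twice and ends up with two endpoints in one sandbox.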

Container Network Interface (CNI)

Container Network Interface Flow

- Container runtime needs to:
  - allocate a network namespace to the container and assign a container ID
  - pass along a number of parameters (CNI config) to the network driver
- Network driver attaches the container to a network and then reports the assigned IP address back to the container runtime (via JSON schema)
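The flow above can be sketched from the runtime's side. In CNI, the parameters travel as environment variables (`CNI_COMMAND`, `CNI_CONTAINERID`, `CNI_NETNS`, `CNI_IFNAME`, `CNI_PATH`), the network config is written to the plugin's stdin, and the plugin prints a JSON result on stdout. This is a minimal sketch, not a full runtime; the default paths (`/opt/cni/bin`, `eth0`) are assumptions, and the result parser handles both the newer `ips` list and the older `ip4`/`ip6` result format.

```python
import json
import os
import subprocess


def cni_env(command, container_id, netns_path, ifname="eth0", cni_path="/opt/cni/bin"):
    """Build the environment a CNI plugin expects (default paths are assumptions)."""
    env = dict(os.environ)
    env.update({
        "CNI_COMMAND": command,          # ADD to attach, DEL to detach
        "CNI_CONTAINERID": container_id,
        "CNI_NETNS": netns_path,         # path to the container's network namespace
        "CNI_IFNAME": ifname,            # interface name to create inside the namespace
        "CNI_PATH": cni_path,            # where plugin executables live
    })
    return env


def invoke_plugin(plugin_binary, net_config, env):
    """Run a plugin: network config JSON on stdin, result JSON on stdout."""
    proc = subprocess.run([plugin_binary], input=json.dumps(net_config).encode(),
                          env=env, capture_output=True, check=True)
    return json.loads(proc.stdout)


def assigned_ips(result):
    """Extract assigned addresses from a plugin result (newer and older formats)."""
    if "ips" in result:                  # result format in CNI spec 0.3.0 and later
        return [ip["address"] for ip in result["ips"]]
    return [result[key]["ip"] for key in ("ip4", "ip6") if key in result]  # older results
```

`invoke_plugin` needs a real plugin binary and a real namespace (and usually root), so only the environment and result handling are exercised without them.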

CNI Network (JSON)

```json
{
  "name": "mynet",
  "type": "bridge",
  "bridge": "cni0",
  "isGateway": true,
  "ipMasq": true,
  "ipam": {
    "type": "host-local",
    "subnet": "10.22.0.0/16",
    "routes": [
      { "dst": "0.0.0.0/0" }
    ]
  }
}
```
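A runtime reads a config like this one, uses its `type` field to pick the plugin executable (here `bridge`) from the directories in `CNI_PATH`, and hands the `ipam` section to the IPAM plugin (here `host-local`). The sketch below shows that lookup; the helper names are hypothetical, and only the `name`/`type` requirement and the path-list search come from the CNI conventions.

```python
import ipaddress
import json
import os


def load_net_config(text):
    """Parse a CNI network config and check the fields every config must have."""
    conf = json.loads(text)
    for key in ("name", "type"):
        if key not in conf:
            raise ValueError(f"network config missing {key!r}")
    return conf


def find_plugin(plugin_type, cni_path):
    """Search each directory in CNI_PATH for an executable named after `type`."""
    for directory in cni_path.split(os.pathsep):   # ':'-separated on Linux
        candidate = os.path.join(directory, plugin_type)
        if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
            return candidate
    return None


def ipam_subnet(conf):
    """The block of addresses the IPAM plugin will allocate from."""
    return ipaddress.ip_network(conf["ipam"]["subnet"])
```

With the config above, `find_plugin(conf["type"], "/opt/cni/bin")` would return `/opt/cni/bin/bridge` on a host where the reference plugins are installed there (an assumed install location).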

CNI and CNM

Similar in that each...

- ...is driver-based, and therefore
  - democratizes the selection of which type of container networking
  - allows multiple network drivers to be active and used concurrently
  - provides a one-to-one mapping of network to that network’s driver
- ...allows containers to join one or more networks
- ...allows the container runtime to launch the network in its own namespace
  - segregating the application/business logic of connecting the container to the network into the network driver
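The shared driver model can be sketched as a small registry: several drivers are registered at once, each network is bound to exactly one driver, and the runtime delegates the actual wiring to that driver. `DriverRegistry` and its methods are hypothetical names for illustration; neither CNM nor CNI defines this class.

```python
class DriverRegistry:
    """Hypothetical sketch: many drivers active at once, one driver per network."""

    def __init__(self):
        self._drivers = {}    # driver name -> callable(container_id, network)
        self._networks = {}   # network name -> driver name (one-to-one)

    def register_driver(self, name, attach):
        self._drivers[name] = attach

    def create_network(self, network, driver):
        if driver not in self._drivers:
            raise KeyError(f"no driver named {driver!r}")
        if network in self._networks:
            raise ValueError(f"network {network!r} already uses {self._networks[network]!r}")
        self._networks[network] = driver

    def connect(self, container_id, network):
        """The runtime only looks up the driver; the driver does the connecting."""
        driver = self._networks[network]
        return self._drivers[driver](container_id, network)
```

A container joining two networks backed by different drivers just calls `connect` once per network.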

CNI and CNM

Different in that...

- CNI supports any container runtime
  - CNM only supports the Docker runtime
- CNI is simpler and has adoption beyond its creator
- CNM acts as a broker for conflict resolution
  - CNI is still considering its approach to arbitration

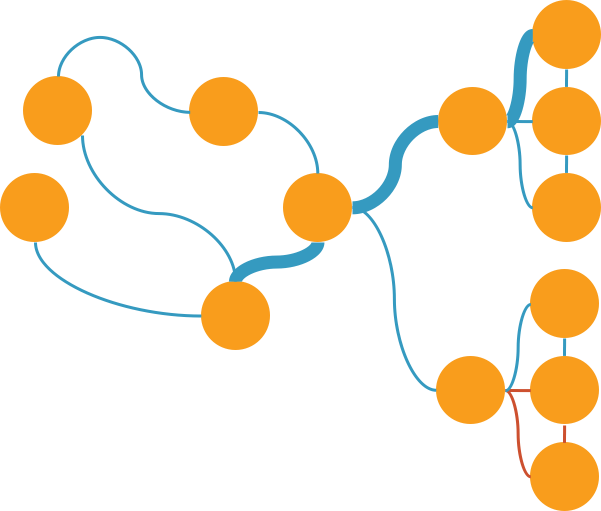

Types of Container Networking

None

Links and Ambassadors

Container-mapped

Bridge

Host

Overlay

Underlay

- MACvlan

- IPvlan

- Direct Routing

Point-to-Point

Fan Networking

None

The container receives a network stack but lacks an external network interface.

It does, however, receive a loopback interface.

Links

- facilitate single-host connectivity
- "discovery" via /etc/hosts or env vars
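Env-var discovery with legacy Docker links works by naming convention: linking a container under alias `db` that exposes 5432/tcp gives the consumer variables such as `DB_PORT_5432_TCP_ADDR` and `DB_PORT_5432_TCP_PORT`. This sketch reads them back; the function name is hypothetical, and the dash-to-underscore normalization mirrors how Docker builds these names.

```python
def linked_service(env, alias, port, proto="tcp"):
    """Return (host, port) for a legacy Docker link, or None if not linked."""
    # Docker upper-cases the alias and turns dashes into underscores.
    name = alias.upper().replace("-", "_")
    prefix = f"{name}_PORT_{port}_{proto.upper()}"
    addr = env.get(f"{prefix}_ADDR")
    found_port = env.get(f"{prefix}_PORT")
    if addr is None or found_port is None:
        return None
    return addr, int(found_port)
```

Inside a linked container you would call it as `linked_service(os.environ, "db", 5432)`. Because the values are injected when the container starts, they go stale if the linked container moves, which is one reason links stay single-host.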

Ambassadors

- facilitate multi-host connectivity
- use a TCP port forwarder (socat)

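(Diagram: on the Web Host, the PHP container links to a MySQL ambassador; on the DB Host, the MySQL container links to a PHP ambassador; the two ambassadors forward traffic between hosts.)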
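An ambassador is just a TCP relay: it accepts connections locally and copies bytes to and from a remote host. The sketch below is a Python `asyncio` stand-in for what socat does in this pattern, not socat itself; `start_ambassador` and its parameters are hypothetical names.

```python
import asyncio


async def _pipe(reader, writer):
    """Copy bytes in one direction until EOF, then close the far side."""
    try:
        while True:
            chunk = await reader.read(65536)
            if not chunk:
                break
            writer.write(chunk)
            await writer.drain()
    except ConnectionError:
        pass                          # peer went away; fall through to teardown
    finally:
        writer.close()


async def start_ambassador(listen_host, listen_port, target_host, target_port):
    """Accept local connections and relay each one to target_host:target_port."""
    async def handle(client_reader, client_writer):
        try:
            up_reader, up_writer = await asyncio.open_connection(target_host, target_port)
        except OSError:
            client_writer.close()     # remote side unreachable
            return
        # Relay both directions concurrently until each side hits EOF.
        await asyncio.gather(_pipe(client_reader, up_writer),
                             _pipe(up_reader, client_writer))

    return await asyncio.start_server(handle, listen_host, listen_port)
```

On the Web Host, the MySQL ambassador would run something like `start_ambassador("0.0.0.0", 3306, "db-host.example", 3306)` (hypothetical hostname) followed by `await server.serve_forever()`; the PHP container links to it as if it were MySQL.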

Container-Mapped

One container reuses (maps to) the networking namespace of another container.

May only be invoked when running a Docker container (cannot be defined in a Dockerfile):

--net=container:some_container_name_or_id
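From the host, you can confirm that two containers really share one namespace by comparing the `/proc/<pid>/ns/net` links of their main processes. Identical targets, such as `net:[4026531992]`, mean the same namespace. This is a Linux-only sketch with hypothetical helper names; reading another process's namespace link generally requires root, and the PIDs would come from something like `docker inspect --format '{{.State.Pid}}' NAME`.

```python
import os


def netns_id(pid="self"):
    """Namespace identifier for a process, e.g. 'net:[4026531992]' (Linux only)."""
    return os.readlink(f"/proc/{pid}/ns/net")


def share_netns(pid_a, pid_b):
    """True if both processes live in the same network namespace."""
    return netns_id(pid_a) == netns_id(pid_b)
```

For a container started with `--net=container:web`, `share_netns` on its PID and the `web` container's PID should be true, while two default bridge-mode containers each get their own namespace.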

Bridge

Ah, yes, docker0

- default networking for Docker

- uses a host-internal network

- leverages iptables for network address translation (NAT) and port-mapping
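Port-mapping on the bridge comes down to two NAT rules: masquerade traffic leaving the bridge subnet, and DNAT a host port to a container's IP and port. This sketch only builds `iptables` argument lists of roughly the shape Docker installs for `-p 8080:80`; nothing is executed. It assumes Docker's default `docker0` bridge on 172.17.0.0/16 and a pre-existing `DOCKER` chain in the nat table, which Docker creates itself.

```python
def port_mapping_rules(host_port, container_ip, container_port, proto="tcp",
                       bridge="docker0", bridge_subnet="172.17.0.0/16"):
    """iptables argv lists for outbound NAT plus one inbound port mapping."""
    # Outbound: rewrite container source addresses to the host's address.
    masquerade = ["iptables", "-t", "nat", "-A", "POSTROUTING",
                  "-s", bridge_subnet, "!", "-o", bridge, "-j", "MASQUERADE"]
    # Inbound: send host_port on any non-bridge interface to the container.
    dnat = ["iptables", "-t", "nat", "-A", "DOCKER",
            "!", "-i", bridge, "-p", proto, "--dport", str(host_port),
            "-j", "DNAT", "--to-destination", f"{container_ip}:{container_port}"]
    return [masquerade, dnat]
```

Every published port adds another DNAT rule, which is part of why bridge mode carries NAT overhead compared with host or underlay networking.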

Host

The container shares the host’s network namespace

- default Mesos networking mode

- better performance

- easy to understand and troubleshoot

- suffers port conflicts

- secure?
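The port-conflict drawback follows from sharing one namespace: every host-mode container draws from the host's single port space, so a second service on the same port fails to bind. This small Linux-oriented sketch probes for that; `port_in_use` is a hypothetical helper name.

```python
import errno
import socket


def port_in_use(port, host="127.0.0.1"):
    """Try to bind host:port; report a conflict instead of raising."""
    probe = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        probe.bind((host, port))
        return False
    except OSError as exc:
        if exc.errno == errno.EADDRINUSE:   # someone in this namespace owns the port
            return True
        raise
    finally:
        probe.close()
```

In bridge mode, each container has its own namespace, so two containers can both listen on port 80 internally and differ only in their host-side mappings.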

Overlay

Uses networking tunnels to deliver communication across hosts

- Most useful in hybrid cloud scenarios
- or when shadow IT is needed
- Many tunneling technologies exist
  - VXLAN being the most commonly used
- Requires a distributed key-value store
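VXLAN, the most common overlay encapsulation, wraps each container's Ethernet frame in UDP (IANA port 4789) behind an 8-byte header that carries a 24-bit network identifier (VNI). This sketch packs and parses that header per RFC 7348 and computes the resulting inner MTU. It assumes an IPv4 underlay with no VLAN tags; the helper names are hypothetical.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned VXLAN port (RFC 7348)
# Bytes that eat into the underlay's IP MTU: outer IPv4 (20) + UDP (8)
# + VXLAN header (8) + the encapsulated inner Ethernet header (14).
VXLAN_OVERHEAD = 20 + 8 + 8 + 14


def vxlan_header(vni):
    """8 bytes: flags with the I bit set, 24 reserved bits, 24-bit VNI, 8 reserved bits."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", 0x08 << 24, vni << 8)


def parse_vni(header):
    """Recover the VNI, rejecting headers whose I flag is not set."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not flags_word & (0x08 << 24):
        raise ValueError("I flag not set; VNI is not valid")
    return vni_word >> 8


def inner_mtu(underlay_mtu=1500):
    """Largest IP packet a container can send without fragmenting the outer packet."""
    return underlay_mtu - VXLAN_OVERHEAD
```

The 24-bit VNI allows about 16 million isolated networks, compared with 4,094 usable VLAN IDs. The 50-byte overhead is why overlay interfaces are commonly configured with an MTU of 1450.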

K/V Store for Overlay Networking

- Docker
  - 1.11 requires K/V store
  - built-in as of 1.12 (raft implementation taken from etcd)
- WeaveMesh - does not require K/V store
- WeaveNet - limited to single network; requires K/V store
- Flannel - requires K/V store
- Plumgrid - requires K/V store; built-in and not pluggable
- Midokura - requires K/V store; built-in and not pluggable
- Calico - requires K/V store

Underlays

expose host interfaces (e.g. the physical network interface at eth0) directly to containers running on the host

- MACvlan

- IPvlan

- Direct Routing

not necessarily public cloud friendly

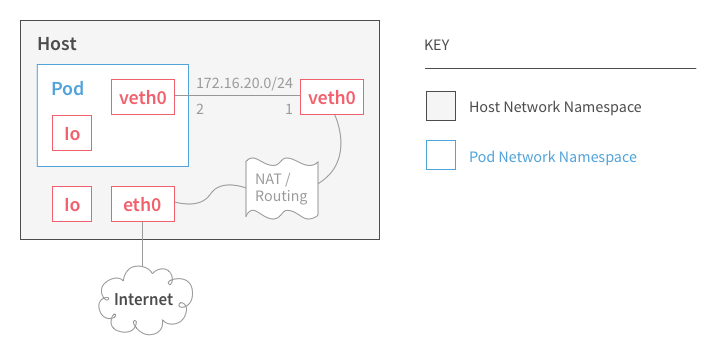

Point-to-Point

- Default rkt networking mode
- Uses NAT (IPMASQ) by default
- Creates a virtual ethernet pair
  - placing one end on the host and the other in the container pod
- Leverages iptables to provide port-forwarding for inbound traffic to the pod
- Containers within the pod communicate with each other over the loopback interface
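Creating the virtual ethernet pair amounts to a handful of iproute2 commands: create both ends on the host, move one end into the pod's namespace, address it, and bring both ends up. This sketch only builds the argument lists. The interface, namespace, and address values are hypothetical, and the real ptp plugin additionally sets up routes and the IP masquerade rule.

```python
def veth_pair_commands(host_if, container_if, netns, container_cidr):
    """iproute2 argv lists that wire one pod into a point-to-point link."""
    return [
        # Create both ends of the pair in the host namespace.
        ["ip", "link", "add", host_if, "type", "veth", "peer", "name", container_if],
        # Move one end into the pod's network namespace.
        ["ip", "link", "set", container_if, "netns", netns],
        # Address and enable the pod-side end (run inside the namespace via -n).
        ["ip", "-n", netns, "addr", "add", container_cidr, "dev", container_if],
        ["ip", "-n", netns, "link", "set", container_if, "up"],
        # Enable the host-side end.
        ["ip", "link", "set", host_if, "up"],
    ]
```

Run as root via `subprocess.run(cmd, check=True)` for each list. Each pod gets its own pair, so traffic between pods always crosses the host's routing table, where iptables can NAT and port-forward it.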

MACvlan

- allows creation of multiple virtual network interfaces behind the host’s single physical interface
- Each virtual interface has unique MAC and IP addresses assigned
  - with restriction: the IP address needs to be in the same broadcast domain as the physical interface
- eliminates the need for the Linux bridge, NAT and port-mapping
  - allowing you to connect directly to the physical interface
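The two MACvlan properties above can be expressed in a few lines: each interface needs its own MAC, and its IP must fall inside the parent interface's subnet. This sketch derives a locally administered MAC from the IPv4 address behind a `02:42` prefix, similar to the scheme Docker's bridge driver uses, and checks the broadcast-domain restriction. The helper names and sample addresses are hypothetical.

```python
import ipaddress


def mac_from_ipv4(ip):
    """Locally administered MAC: 02:42 followed by the four IPv4 octets."""
    octets = ipaddress.IPv4Address(ip).packed
    return ":".join(f"{b:02x}" for b in b"\x02\x42" + octets)


def is_locally_administered_unicast(mac):
    """Bit 1 of the first octet set (local), bit 0 clear (unicast)."""
    first = int(mac.split(":")[0], 16)
    return bool(first & 0x02) and not (first & 0x01)


def same_broadcast_domain(container_ip, parent_cidr):
    """MACvlan restriction: the container IP must sit in the parent interface's subnet."""
    parent = ipaddress.ip_network(parent_cidr, strict=False)
    return ipaddress.ip_address(container_ip) in parent
```

Tying the MAC to the IP guarantees uniqueness as long as IPs are unique, but each container still adds a MAC the upstream switch must learn, which is the scaling concern IPvlan addresses.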

IPvlan

- allows creation of multiple virtual network interfaces behind the host’s single physical interface
- Each virtual interface has a unique IP address assigned
  - Same MAC address used for all containers
- L2-mode containers must be on the same network as the host (similar to MACvlan)
- L3-mode containers must be on a different network than the host
  - Network advertisement and redistribution into the network still needs to be done.
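The L2/L3 addressing rule reduces to a subnet membership test, sketched below. The mode strings `l2` and `l3` match the values Docker's ipvlan driver accepts for `ipvlan_mode`. The function name and sample networks are hypothetical, and in L3 mode the container subnet still has to be routed or advertised upstream, as noted above.

```python
import ipaddress


def ipvlan_address_ok(mode, container_ip, host_cidr):
    """L2 mode: container shares the host's subnet. L3 mode: it must not."""
    host_net = ipaddress.ip_network(host_cidr, strict=False)
    in_host_net = ipaddress.ip_address(container_ip) in host_net
    if mode == "l2":
        return in_host_net
    if mode == "l3":
        return not in_host_net
    raise ValueError(f"unknown IPvlan mode: {mode!r}")
```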

MACvlan and IPvlan

- While multiple modes of networking are supported on a given host, MACvlan and IPvlan can’t be used on the same physical interface concurrently.
- For ARP and broadcast traffic, the L2 modes of these underlay drivers operate just as a server connected to a switch does, by flooding and learning using 802.1D packets.
- IPvlan L3 mode: no multicast or broadcast traffic is allowed in.
- In short, if you’re used to running trunks down to hosts, L2 mode is for you.
- If scale is a primary concern, L3 has the potential for massive scale.

Direct Routing

- Benefits of pushing past L2 to L3
  - resonates with network engineers
  - leverage existing network infrastructure
  - use routing protocols for connectivity; easier to interoperate with existing data center across VMs and bare metal servers
- Better scaling
- More granular control over filtering and isolating network traffic
- Easier traffic engineering for quality of service
- Easier to diagnose network issues
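With direct routing, each host advertises the block of container addresses it owns, for example over BGP, and every router forwards by longest-prefix match. This sketch shows that lookup over a hypothetical route table; the prefixes and host names are illustrative assumptions.

```python
import ipaddress

# Hypothetical routes: each host advertises the container block it owns.
ROUTES = {
    "10.0.0.0/8": "core-gateway",
    "10.1.1.0/24": "host-a",
    "10.1.2.0/24": "host-b",
    "10.1.2.128/25": "host-c",
}


def next_hop(dest_ip, routes=ROUTES):
    """Longest-prefix match: the most specific route containing dest_ip wins."""
    addr = ipaddress.ip_address(dest_ip)
    best = None
    for cidr, hop in routes.items():
        net = ipaddress.ip_network(cidr)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, hop)
    return best[1] if best else None
```

Because container addresses are ordinary routes, standard tools such as route tables and traceroute show where traffic goes, which is what makes L3 networks easier to diagnose than tunneled overlays.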

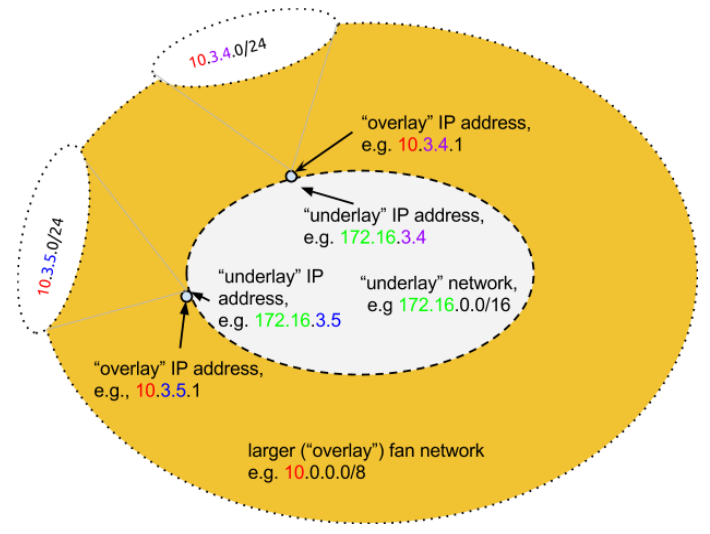

Fan Networking

A way of gaining access to many more IP addresses, expanding from one assigned IP address to roughly 250 more

- “address expansion”: multiplies the number of available IP addresses on the host, providing an extra 253 usable addresses for each host IP
  - Fan addresses are assigned as subnets on a virtual bridge on the host
  - IP addresses are mathematically mapped between networks
- uses IP-in-IP tunneling; high performance
- particularly useful when running containers in a public cloud
  - where a single IP address is assigned to a host and spinning up additional networks is prohibitive, or running another load-balancer instance is costly
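The mathematical mapping works by copying the host-specific bits of each host's underlay address into an overlay prefix. With a /16 underlay and a /8 overlay, the host at 172.16.3.4 owns the overlay block 250.3.4.0/24. The networks used here are illustrative assumptions, and `fan_subnet` is a hypothetical helper.

```python
import ipaddress


def fan_subnet(host_ip, underlay_cidr, overlay_cidr):
    """Map a host's underlay IPv4 address to the overlay subnet it owns."""
    underlay = ipaddress.IPv4Network(underlay_cidr)
    overlay = ipaddress.IPv4Network(overlay_cidr)
    host = ipaddress.IPv4Address(host_ip)
    if host not in underlay:
        raise ValueError(f"{host} is not in underlay {underlay}")
    host_bits = 32 - underlay.prefixlen            # bits that differ from host to host
    new_prefix = overlay.prefixlen + host_bits      # e.g. 8 + 16 = 24
    if new_prefix > 32:
        raise ValueError("overlay too small to hold a block for every host")
    host_part = int(host) & ((1 << host_bits) - 1)  # e.g. the "3.4" in 172.16.3.4
    base = int(overlay.network_address) | (host_part << (32 - new_prefix))
    return ipaddress.IPv4Network(f"{ipaddress.IPv4Address(base)}/{new_prefix}")
```

A /24 holds 256 addresses. Subtracting the network and broadcast addresses, plus one for the host's Fan bridge (assumed here to take an address), leaves the 253 container addresses per host IP quoted above. Since any host can compute any other host's block from its IP, no key-value store is needed to find where a container lives.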

Fan Networking

Network Capabilities and Services

IPAM, multicast, broadcast, IPv6, load-balancing, service discovery, policy, quality of service, advanced filtering and performance are all additional considerations to account for when selecting networking that fits your needs.

IPv6 and IPAM

IPv6

-

lack of support for IPv6 in the top public clouds

- reinforces the need for other networking types (overlays and fan networking)

- some tier 2 public cloud providers offer support for IPv6

IPAM

- most container runtime engines default to host-local for assigning addresses to containers as they are connected to networks.

- Host-local IPAM involves defining a fixed block of IP addresses to be selected.

- DHCP is universally supported across the container networking projects.

- CNM and CNI both have IPAM built-in and plugin frameworks for integration with IPAM systems
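Host-local IPAM can be sketched as a pool handing out addresses from a fixed block, such as the 10.22.0.0/16 subnet in the CNI example config. This simplified version reserves the first host address as the gateway and allocates the rest in order, reusing released addresses. The class is a hypothetical illustration; the real host-local plugin persists allocations on disk and supports options such as range start and end.

```python
import ipaddress


class HostLocalPool:
    """Simplified host-local-style IPAM over one fixed subnet."""

    def __init__(self, subnet, reserve_gateway=True):
        self.network = ipaddress.ip_network(subnet)
        self._hosts = iter(self.network.hosts())   # skips network and broadcast addresses
        self.gateway = next(self._hosts) if reserve_gateway else None
        self.allocated = {}                        # container ID -> address
        self._released = []

    def allocate(self, container_id):
        """Return this container's address, assigning a new one if needed."""
        if container_id in self.allocated:
            return self.allocated[container_id]
        addr = self._released.pop(0) if self._released else next(self._hosts, None)
        if addr is None:
            raise RuntimeError(f"{self.network} is exhausted")
        self.allocated[container_id] = addr
        return addr

    def release(self, container_id):
        """Return a container's address to the pool (no-op if unknown)."""
        addr = self.allocated.pop(container_id, None)
        if addr is not None:
            self._released.append(addr)
```

Because the pool lives on one host, two hosts configured with the same subnet would hand out duplicate addresses. This is why multi-host setups pair host-local with a per-host subnet or replace it with DHCP or a plugin-backed IPAM system.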


Docker 1.12 (Load-balancing)

Technology Strategy Project

Container Network Performance

GTM

- Internal tool
  - harden deployment
- Hosted tool
  - validate value of functionality / educational tutorial
  - customer telemetry / engagement
- Free tool
  - project announcement article / publish reports
  - customers upload reports / get netdex
- Open source project
  - Upsell to NPM / SAM / Container Monitor

Success

- Credibility within cloud native ecosystem
  - imprint on open source community
- Market and capability validation
  - prior to insertion into core product
  - # of new to franchise

Purpose

- Capture mindshare within emerging market
- Gain insight into customer container infra
- Ascertain market viability
- Build internal know-how
  - Position for inclusion in offerings

Functions / Benefit

- Network type capacity check
  - Education and decision facilitation
- Network type capacity comparison
  - Comparative “netdex” report
  - Performance report - as an industry standard reference
  - Visibility of container network flow size and direction

Container Network Performance

See additional research.

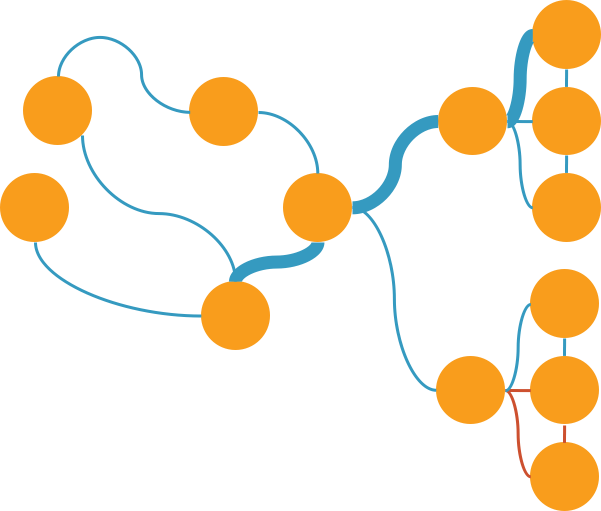

Container Networking Projects

- Nearly all are open source

- Use a variety of technologies

- VXLAN

- UDP Overlay

- L3

- with or w/o TLS

- BGP

- IP routed